The Transformation of Digital Infrastructure into Industrial Powerhouses

The global data center industry is currently undergoing a radical metamorphosis, moving from the quiet background of the digital economy to the very forefront of global industrial strategy. For several decades, these facilities were viewed as mere support systems—digital plumbing that facilitated the flow of internal emails and basic credit card transactions. Today, however, the explosive rise of generative artificial intelligence has catalyzed a shift so profound that economists and technologists frequently compare it to a second Industrial Revolution. This transformation is not merely about adding more storage or faster processors; it represents a fundamental change in how the world consumes energy, utilizes land, and manages the electrical grid on a planetary scale.

The purpose of this timeline is to trace the evolution of data centers from localized utility rooms to the gigawatt-scale supercomputing hubs of today. By examining the transition through distinct technological eras, one can understand how the current AI boom is reshaping the global economy. This topic is of critical importance today because the massive energy and capital requirements of AI-centric infrastructure are testing the limits of existing utility frameworks and forcing a total reimagining of how societal infrastructure is funded and sustained for future generations. As these facilities grow into the massive physical anchors of the modern age, they transition from invisible servers to the most significant industrial drivers of the twenty-first century.

From Server Closets to Gigawatt Hubs: A Chronological Evolution

1990 – 2010: The Era of Internal IT and Server Closets

During the early years of the digital age, data centers were largely localized and modest in scope, serving as the digital heart of individual companies rather than global networks. Often referred to as “server closets,” these facilities were typically tucked away in office basements or dedicated rooms within corporate headquarters. Their primary function was to support internal business operations, such as managing accounting software, human resources databases, and basic internal email systems. At this stage, the infrastructure was reactive, built solely to handle the specific administrative needs of a single organization.

During this period, power requirements were measured in kilowatts or, at most, a few megawatts, and the environmental footprint of these operations went largely unnoticed by the general public. There was no need for massive cooling towers or dedicated power substations, as the heat generated by a few dozen racks of servers could be managed by standard building air conditioning. The digital world was a supplementary tool for business, and the infrastructure reflected that secondary status, remaining hidden and relatively inexpensive to maintain.

2010 – 2023: The Rise of the Mobile and Cloud Era

As smartphones became ubiquitous and streaming services replaced physical media, the industry entered the era of the “hyperscaler.” Companies like Amazon, Google, and Microsoft began building massive cloud-computing facilities to centralize the internet’s processing power and provide on-demand services to billions of users. These facilities, ranging from 10 to 100 megawatts, allowed for the explosion of social media, video-on-demand, and software-as-a-service models. This era marked the first time that data center development became a significant driver of real estate and construction trends in specific geographic hubs.

While these data centers were significantly larger than the server closets of the previous era, they remained relatively discreet and functioned primarily as centralized warehouses for the world’s digital data. They were the engines of the consumer internet, processing photos, videos, and search queries. The primary goal was low latency: ensuring that a user in a major city could access their data almost instantly. Even at this scale, the facilities were still seen as storage sites rather than heavy industrial plants, and their impact on the local power grid was manageable within existing utility planning cycles.

2024 – Present: The Dawn of the AI and Gigawatt Era

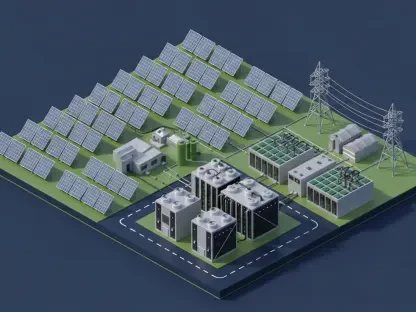

The current period marks a radical departure from simple data storage toward active AI training and inference, which demand far greater computational intensity. Modern data centers have evolved into sophisticated supercomputer hubs that consume energy at an unprecedented scale to power the high-density chips necessary for machine learning. We are now seeing the rise of gigawatt-level projects, with power demands previously associated with entire major cities or heavy industrial sites such as steel mills or automotive factories.

This era is defined by staggering capital investments, with individual projects frequently exceeding $10 billion in construction and hardware costs alone. The aggressive development pace is another hallmark of this era, where facilities reach full operational capacity within months rather than years to meet the insatiable demand for AI capabilities. This is no longer just an evolution of the cloud; it is the creation of a new category of industrial infrastructure that sits at the intersection of high technology and heavy utility demand.

Analyzing the Turning Points and Shifting Industry Standards

The most significant turning point in this evolution is the transition from energy “headroom” to near-total grid utilization. In previous eras, data centers typically reserved more power than they actually used, providing a buffer that absorbed fluctuations in the electrical grid. In the AI era, facilities are using upwards of 95% of the power allocated to them, running at near-maximum capacity around the clock to train and serve AI models. This shift has turned data centers into “large load” entities that challenge the stability of grids managed by operators such as ERCOT in Texas and PJM, which serves much of the Mid-Atlantic and parts of the Midwest.
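The difference between reserved headroom and near-total utilization can be made concrete with some simple arithmetic. The sketch below is illustrative only: the function names are hypothetical, the 1,000 MW allocation stands in for a generic gigawatt-scale site, and the 60% figure for an earlier-era facility is an assumption chosen purely for contrast, since only the 95% figure comes from the discussion above.

```python
HOURS_PER_YEAR = 8760  # hours in a non-leap year


def annual_energy_mwh(allocated_mw: float, utilization: float) -> float:
    """Energy drawn over one year (MWh) at a constant share of the allocation."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return allocated_mw * utilization * HOURS_PER_YEAR


def unused_headroom_mw(allocated_mw: float, utilization: float) -> float:
    """Allocated capacity (MW) left unused as a buffer for the grid."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return allocated_mw * (1.0 - utilization)


if __name__ == "__main__":
    allocation_mw = 1000.0  # hypothetical gigawatt-scale allocation

    # 0.95 reflects the "upwards of 95%" figure; 0.60 is an assumed
    # earlier-era utilization used only for comparison.
    for label, utilization in [("assumed earlier era", 0.60), ("AI era", 0.95)]:
        energy = annual_energy_mwh(allocation_mw, utilization)
        headroom = unused_headroom_mw(allocation_mw, utilization)
        print(f"{label}: {energy:,.0f} MWh per year, {headroom:,.0f} MW of headroom")
```

Under these assumptions, a 1,000 MW allocation run at 95% draws about 8.3 million MWh a year and leaves only about 50 MW of buffer, which is why grid operators treat such sites as a distinct class of load.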

These shifts have highlighted notable gaps in current regulatory frameworks that were never intended to handle such rapid industrial growth. Most electrical grids were designed for a slow-growth environment where residential needs and small commercial developments were the primary priority. The rapid influx of tech-driven industrial demand has created a friction point between “legacy loads”—the everyday citizens and small businesses—and the massive capital reserves of Big Tech firms. As the industry moves forward, the primary area for future exploration will be the development of fair cost-sharing models that allow for industrial growth without placing a disproportionate financial burden on the general public.

Regional Friction, Innovation, and the Social Reality of Growth

The expansion of AI infrastructure is not occurring without significant regional and social friction as the physical reality of these buildings clashes with local communities. In many areas, developers are moving into rural or unincorporated regions to avoid the strict regulations and high land costs of urban centers. This migration has led to a surge in community opposition as residents grapple with the sudden arrival of massive industrial neighbors. Between 2024 and 2025, grassroots protests over water consumption for cooling and the loss of rural land resulted in the delay or cancellation of projects totaling over $64 billion.

Furthermore, there is a common misconception that data centers are “invisible” entities with little impact on local economies beyond property taxes. In reality, they are becoming primary drivers of utility policy and grid management. Experts describe the present as a “transition year” for rulemaking, in which the decisions made by grid operators will dictate energy costs for the next decade. While emerging innovations like liquid cooling and small modular nuclear reactors are being explored to meet energy needs, the industry remains in a volatile state of hyper-growth. Ultimately, the AI data center boom is a balancing act between the pursuit of technological dominance and the practical realities of environmental and social sustainability.

The history of data center development demonstrates that infrastructure must eventually answer to the physical constraints of the world. As the industry has moved from simple closets to city-sized hubs, it has run into the limits of existing power grids and public patience. Future strategies emphasize onsite power generation and more transparent community engagement to avoid a repeat of the $64 billion in delayed and canceled projects seen between 2024 and 2025. Stakeholders increasingly recognize that the next phase requires a move toward sustainable integration, ensuring that the march of artificial intelligence does not come at the expense of local resource stability. Experts recommend that policymakers prioritize modular energy solutions and updated cost-allocation models to sustain this new industrial age.