The rapid integration of machine learning into the machinery of local government has transformed once-static bureaucratic processes into dynamic, data-responsive systems that operate with unprecedented speed. AI-driven assessment tools represent more than just a software update; they are a fundamental reimagining of how public value is measured and how administrative decisions are reached. This review examines the shift from manual oversight to automated precision, exploring how these systems redefine the relationship between the state and the citizen through a lens of efficiency and algorithmic accountability.

The transition toward these tools was born out of a critical need to handle massive datasets that human departments could no longer process in real time. By moving beyond simple spreadsheets, New York counties have adopted sophisticated platforms capable of analyzing property values, infrastructure needs, and socioeconomic trends simultaneously. This evolution is rooted in the principle of predictive governance, where the goal is not merely to react to current conditions but to anticipate shifts in the community landscape before they manifest as crises.

Evolution and Core Principles of Automated Public Evaluations

The journey toward automated evaluations began with the digitization of records, but the true breakthrough occurred when these records were linked to neural networks. Unlike traditional methods that relied on periodic manual audits, current systems utilize continuous data streams to provide a living map of jurisdictional health. This approach is intended to reduce human bias in valuations by basing assessments on objective market indicators and historical patterns rather than subjective interpretations.

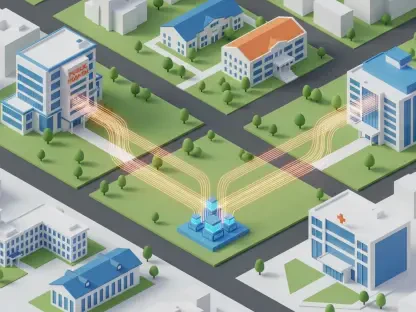

Furthermore, this technology sets a benchmark for “Smart City” initiatives globally. By integrating disparate data points, ranging from environmental sensors to transaction records, the system creates a holistic view of the public sector. This interconnectedness allows for a more agile response to economic fluctuations, positioning local governments to be as technologically competitive as the private sector industries they regulate.

Technical Architecture and Feature Breakdown

Automated Administrative Algorithms: Precision in Practice

At the heart of these tools lies a suite of administrative algorithms designed to execute complex calculations at a scale previously thought impossible. These algorithms utilize regression analysis and spatial modeling to determine property assessments and resource distribution. By processing variables like proximity to transit and local inflation rates, the system produces results that are statistically more consistent than those generated by individual assessors. This consistency is the primary selling point for counties looking to reduce litigation over perceived unfairness in tax or zoning decisions.
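The regression step described above can be illustrated with a minimal sketch. It assumes an ordinary least squares model fitted with NumPy; the feature columns, the sale prices, and the function names `fit_linear_model` and `predict` are hypothetical and chosen only for illustration. Production systems would likely add spatial terms and far more variables, which this sketch does not attempt.

```python
import numpy as np

# Hypothetical training data: one row per sold parcel (values are made up).
# Columns: living area (sq ft), distance to nearest transit stop (km),
# local inflation rate (%).
features = np.array([
    [1200.0, 0.5, 3.1],
    [1500.0, 1.2, 3.1],
    [900.0, 0.3, 2.8],
    [2000.0, 2.5, 3.4],
    [1700.0, 0.8, 2.8],
    [1100.0, 1.9, 3.4],
])
sale_prices = np.array(
    [310_000.0, 345_000.0, 255_000.0, 410_000.0, 400_000.0, 250_000.0]
)


def fit_linear_model(x, y):
    """Fit ordinary least squares with an intercept term.

    Returns a coefficient vector: intercept first, then one weight per column.
    """
    design = np.column_stack([np.ones(len(x)), x])
    coefficients, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coefficients


def predict(coefficients, x):
    """Apply fitted coefficients to new feature rows."""
    design = np.column_stack([np.ones(len(x)), x])
    return design @ coefficients


coefficients = fit_linear_model(features, sale_prices)
# Estimate a value for a parcel that was not in the training data.
new_parcel = np.array([[1400.0, 1.0, 3.0]])
estimate = predict(coefficients, new_parcel)[0]
print(f"Estimated value: {estimate:,.0f}")
```

Because every parcel is scored by the same fitted coefficients, two similar properties receive similar values, which is the kind of consistency the paragraph above refers to.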

However, the performance of these algorithms is heavily dependent on the quality of the training data. If the underlying information is skewed by historical inequities, the machine learning models risk codifying those biases into the future. To combat this, modern implementations include “de-biasing” layers that scan for patterns of systemic exclusion. This unique feature differentiates the current generation of tools from earlier, more rigid iterations, offering a level of self-correction that is essential for maintaining public trust.
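The article does not describe how such a “de-biasing” layer works internally. One simple check that could sit in front of it, shown here purely as an assumption, compares the median assessment-to-sale-price ratio of each neighborhood group with the overall median and flags groups that deviate by more than a tolerance. The function name, the 5% tolerance, and the sample records are all hypothetical.

```python
from collections import defaultdict
from statistics import median


def flag_ratio_disparities(records, tolerance=0.05):
    """Flag groups whose median assessment ratio deviates from the overall median.

    `records` is an iterable of (group, assessed_value, sale_price) tuples.
    A group is flagged when the relative deviation exceeds `tolerance`.
    """
    ratios_by_group = defaultdict(list)
    for group, assessed, sale_price in records:
        ratios_by_group[group].append(assessed / sale_price)

    all_ratios = [r for ratios in ratios_by_group.values() for r in ratios]
    overall = median(all_ratios)

    flagged = {}
    for group, ratios in ratios_by_group.items():
        group_median = median(ratios)
        if abs(group_median - overall) / overall > tolerance:
            flagged[group] = round(group_median, 3)
    return flagged


# Made-up records: district_b is assessed above its sale prices.
records = [
    ("district_a", 190_000, 200_000),
    ("district_a", 285_000, 300_000),
    ("district_a", 240_000, 250_000),
    ("district_b", 220_000, 200_000),
    ("district_b", 165_000, 150_000),
]
print(flag_ratio_disparities(records))
```

With this sample data only `district_b` is flagged. A flag of this kind identifies where to look; it does not by itself establish that the model is biased or explain why.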

Data Governance and Privacy Frameworks: The Digital Shield

Because these systems handle sensitive information, a robust governance framework is integrated directly into the technical architecture. This involves a tiered access model where “Strictly Necessary” data for site functionality is isolated from “Targeting” or “Performance” data. By utilizing advanced encryption and decentralized storage, the tools are designed so that the government can see aggregate trends while the individual identity of the citizen remains protected through anonymization.

This privacy-first design is a direct response to the requirements of the California Consumer Privacy Act (CCPA) and other evolving regulations. It provides users with granular agency, allowing them to opt out of non-essential data collection without losing access to public services. This balance between data utility and individual rights represents a significant departure from the “all-or-nothing” approach of early digital governance, making these tools a more palatable option for a public that is increasingly wary of the surveillance state.
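A minimal sketch of how the tiered model and the opt-out could fit together is shown below. It assumes each collected field is tagged with one of the three tiers named above and that a resident's opt-outs are stored as a set of tiers; the field names, the `FIELD_TIERS` mapping, and the `filter_by_consent` function are hypothetical.

```python
from enum import Enum


class Tier(Enum):
    STRICTLY_NECESSARY = "strictly_necessary"
    PERFORMANCE = "performance"
    TARGETING = "targeting"


# Hypothetical mapping of collected fields to data tiers.
FIELD_TIERS = {
    "session_id": Tier.STRICTLY_NECESSARY,
    "parcel_id": Tier.STRICTLY_NECESSARY,
    "page_load_ms": Tier.PERFORMANCE,
    "referrer": Tier.TARGETING,
}


def filter_by_consent(record, opted_out):
    """Return only the fields that may be stored for this resident.

    Strictly necessary fields are always kept, so the service keeps working.
    Fields in an opted-out tier, and fields with no known tier, are dropped.
    """
    kept = {}
    for field, value in record.items():
        tier = FIELD_TIERS.get(field)
        if tier is None:
            continue  # unmapped fields are dropped by default
        if tier is Tier.STRICTLY_NECESSARY or tier not in opted_out:
            kept[field] = value
    return kept


record = {
    "session_id": "abc123",
    "parcel_id": "P-001",
    "page_load_ms": 420,
    "referrer": "https://example.org",
}
# A resident who opted out of targeting data only.
print(filter_by_consent(record, {Tier.TARGETING}))
```

Dropping unmapped fields by default is a deliberate choice in this sketch: a newly added field is not collected until someone assigns it a tier.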

Emerging Trends in Digital Transparency and User Agency

One of the most compelling trends in this sector is the rise of explainable AI (XAI), which seeks to pull back the curtain on how automated decisions are made. Instead of presenting a “black box” output, newer modules provide a clear rationale for every assessment, detailing the specific factors that influenced the final number. This move toward transparency is not just a technical upgrade but a shift in the philosophy of governance, prioritizing the citizen’s right to understand the logic of the state.
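For a simple linear valuation model, the per-factor rationale described above can be read directly from the model: each factor's contribution is its weight multiplied by the parcel's value for that factor. The sketch below assumes such a linear model; the baseline, weights, and feature names are hypothetical, and more complex models such as neural networks would need dedicated attribution methods that are not shown here.

```python
def explain_assessment(baseline, weights, features):
    """Break a linear assessment into per-factor contributions.

    Returns the total assessed value and a list of (factor, contribution)
    pairs sorted from largest to smallest absolute impact.
    """
    contributions = {
        name: weights[name] * value for name, value in features.items()
    }
    total = baseline + sum(contributions.values())
    ranked = sorted(
        contributions.items(), key=lambda item: abs(item[1]), reverse=True
    )
    return total, ranked


# Hypothetical model weights (dollars per unit of each factor).
weights = {
    "living_area_sqft": 150.0,
    "km_to_transit": -8000.0,
    "lot_size_acres": 20000.0,
}
parcel = {"living_area_sqft": 1400, "km_to_transit": 1.5, "lot_size_acres": 0.25}

total, ranked = explain_assessment(50_000.0, weights, parcel)
print(f"Assessed value: {total:,.0f}")
for factor, amount in ranked:
    print(f"  {factor}: {amount:+,.0f}")
```

A resident reading this output can see which factor moved the number most and in which direction, which is the kind of rationale a “black box” output withholds.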

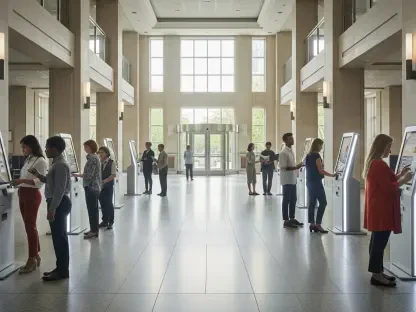

Moreover, we are seeing a shift toward “User Agency” where residents can interact with their own data profiles through intuitive dashboards. These platforms allow individuals to correct inaccuracies in real time, effectively crowdsourcing data validation. This collaborative model reduces the administrative burden on government staff while empowering the community to take an active role in the accuracy of their public records.

Real-World Applications in New York Counties and Beyond

In various New York counties, these tools have already been deployed to modernize property tax assessments, leading to a noticeable decrease in appraisal wait times. For instance, some jurisdictions have reported that what once took months of manual labor is now accomplished in days, allowing officials to focus on high-level strategy rather than clerical data entry. This real-world implementation serves as a proof of concept for other states looking to trim the fat from their administrative budgets.

Beyond property, the technology is finding a home in public health and infrastructure management. By analyzing traffic patterns and hospital admission rates, counties can predict where new services will be needed most. This proactive deployment of resources demonstrates the versatility of AI tools; they are not limited to financial assessments but serve as a multi-tool for general governance, capable of adapting to the unique needs of both urban centers and rural districts.

Challenges in Data Security and Ethical Oversight

Despite the benefits, significant hurdles remain, particularly regarding the ethical oversight of automated systems. The risk of “algorithmic drift” (more commonly called model drift or concept drift), where a model becomes less accurate over time due to changing external conditions, requires constant human vigilance. Governments must establish permanent oversight committees to audit these systems regularly, ensuring that the software does not become a silent dictator of public policy.
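One way an oversight committee could watch for this kind of drift, sketched here under stated assumptions, is to compare the model's error on recent sales against the error measured when the model was approved. The error metric (mean absolute percentage error), the 50% allowed increase, and the function names are hypothetical choices for illustration.

```python
from statistics import mean


def mean_abs_pct_error(predicted, actual):
    """Average of |predicted - actual| / actual across all pairs."""
    return mean(abs(p - a) / a for p, a in zip(predicted, actual))


def drift_detected(baseline_error, recent_predicted, recent_actual,
                   max_increase=0.5):
    """Return True when recent error exceeds the approved baseline.

    `max_increase` is the relative increase tolerated before flagging,
    so 0.5 means the recent error may be up to 1.5x the baseline.
    """
    recent_error = mean_abs_pct_error(recent_predicted, recent_actual)
    return recent_error > baseline_error * (1 + max_increase)


# Made-up example: the model was approved with a 5% average error.
baseline_error = 0.05
recent_predicted = [300_000, 410_000, 255_000]
recent_actual = [345_000, 480_000, 290_000]
if drift_detected(baseline_error, recent_predicted, recent_actual):
    print("Recent error exceeds tolerance; schedule a human review.")
```

The check only raises a flag. Deciding whether to retrain, recalibrate, or suspend the model stays with the human reviewers.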

Technical bottlenecks also persist in the form of legacy system integration. Many local governments still operate on outdated databases that do not easily communicate with modern AI APIs. Bridging this gap requires substantial capital investment and technical expertise, which can be a barrier to entry for smaller or less-funded counties. The challenge lies in ensuring that the digital divide does not result in a two-tier system of governance where only wealthy areas benefit from algorithmic efficiency.

The Future of Smart Governance and Integrated AI

The trajectory of this technology points toward a fully integrated ecosystem where AI does not just assist but optimizes every facet of public life. We are moving toward a reality where “Living Assessments” reflect real-time economic shifts, potentially eliminating the shock of annual tax adjustments. The long-term impact will likely be a more stable and predictable fiscal environment for both the government and the taxpayer.

Future breakthroughs in quantum computing and edge processing will further enhance these capabilities, allowing for even more complex simulations of urban growth and environmental impact. As these tools become more sophisticated, the role of the public servant will transition from a data processor to a data interpreter, focusing on the ethical implications and human elements that a machine can never fully grasp.

Final Assessment of AI-Driven Assessment Integration

The review of these AI-driven tools demonstrated that the shift toward automated governance was both inevitable and largely beneficial for operational efficiency. It was clear that the successful implementations were those that prioritized transparency and data privacy over raw processing power. While the technical hurdles of legacy integration and the ethical risks of bias were significant, the proactive measures taken by many New York counties provided a viable roadmap for mitigation. The transition succeeded in making the machinery of the state more responsive to the needs of its citizens.

Ultimately, the verdict was that AI-driven assessment tools were a transformative force in the public sector. To move forward, policymakers should focus on standardizing ethical audit protocols and investing in workforce retraining to handle the shift in administrative roles. The integration of these tools did not replace human judgment but rather provided a more stable foundation upon which that judgment could be exercised. Those who embraced the balance between innovation and oversight positioned themselves at the forefront of the next generation of smart governance.