The silent hallways of municipal buildings often stand in stark contrast to the buzzing server rooms of Silicon Valley, creating a persistent gap that leaves critical public services tethered to legacy systems while the private sector races toward an automated future. This technological disparity is not merely a matter of convenience; it represents a fundamental barrier to efficiency, equity, and the effective distribution of taxpayer-funded resources. While corporate giants can afford to fail fast and iterate, public agencies operate under a microscope of accountability where a single technical glitch can disrupt the lives of thousands of vulnerable residents. Consequently, the adoption of modern tools like artificial intelligence has historically been viewed with a mix of curiosity and deep-seated apprehension by local government administrators.

Why Are Local Governments Traditionally the Last to Embrace the Digital Revolution?

The hesitation within local government to adopt cutting-edge technology stems from a culture deeply rooted in the necessity of risk mitigation. Unlike a startup that might prioritize rapid growth over stability, a municipal agency manages essential functions such as emergency response, public health, and social safety nets. In these environments, the “move fast and break things” philosophy is not just impractical; it is potentially catastrophic. When the stakes involve the distribution of food stamps or the management of unemployment benefits, there is no room for the experimental errors that define the early stages of technological implementation. This inherent risk aversion creates a natural resistance to any tool that has not been exhaustively vetted and proven over decades of use.

Furthermore, integrating advanced technology into public infrastructure often feels like a herculean feat due to the rigid nature of administrative budgets. Public funding is typically earmarked for specific, immediate needs, leaving very little room for the research and development cycles required to implement artificial intelligence. For many jurisdictions, the choice is between hiring an additional caseworker or investing in a software platform that might not show results for several years. This zero-sum game often forces agencies to stick with antiquated, manual processes that are inefficient but familiar. Overcoming this barrier requires a fundamental shift in leadership, moving from a state of digital apprehension to one where administrators feel empowered and informed enough to make strategic investments in modernization.

Bridging the Technological Divide Between the Private and Public Sectors

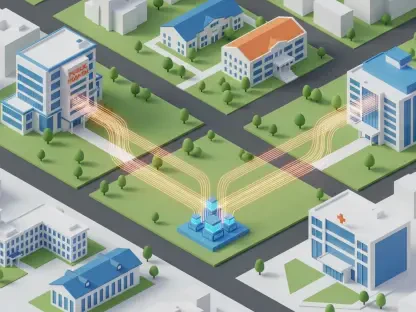

To address these systemic hurdles, the AI Learning and Innovation Hub has emerged as a strategic intervention designed specifically for the unique constraints of the public sector. This initiative, spearheaded by the nonprofit organization Social Finance in partnership with HumanServices.ai, provides a structured environment where government leaders can explore the potential of artificial intelligence without the fear of public failure. Acting as a collaborative bridge, the hub facilitates a dialogue between the technologists who build these tools and the civil servants who understand the nuances of public policy. This partnership ensures that the technology is not forced upon agencies but is instead tailored to solve specific, real-world administrative challenges.

The long-term sustainability of this innovation is anchored by a $6 million grant from the GitLab Foundation, which allows the program to expand its reach and provide ongoing support to various cohorts. This financial backing is crucial because it removes the immediate burden of cost from the participating agencies, allowing them to focus on the educational and implementation aspects of the technology. The hub essentially creates a “sandbox” environment—a protected space for low-stakes experimentation and testing. Within this framework, agencies can prototype AI-driven solutions, test them against historical data, and refine their parameters before they ever interact with a live resident inquiry or a critical benefit application.
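To make the idea of testing against historical data concrete, here is a minimal Python sketch of a replay check. It is an illustration under stated assumptions, not the hub's actual tooling: the `HistoricalCase` schema, the `backtest` helper, and the keyword-based stand-in for an AI tool are all hypothetical, and the sample records are invented.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class HistoricalCase:
    """A past inquiry with the outcome staff actually recorded (hypothetical schema)."""
    case_id: str
    text: str
    recorded_outcome: str


def backtest(candidate: Callable[[str], str], cases: list[HistoricalCase]):
    """Replay historical cases through a candidate tool; nothing reaches live residents."""
    mismatches = [c.case_id for c in cases if candidate(c.text) != c.recorded_outcome]
    agreement = 1 - len(mismatches) / len(cases) if cases else 0.0
    return agreement, mismatches


# Stand-in for an AI-driven router; a real prototype would call a model here.
def keyword_router(text: str) -> str:
    return "benefits" if "benefit" in text.lower() else "general"


# Illustrative records only, not real agency data.
history = [
    HistoricalCase("c1", "Question about my benefit renewal", "benefits"),
    HistoricalCase("c2", "Where is the office located?", "general"),
    HistoricalCase("c3", "I lost my EBT card", "benefits"),
]

agreement, mismatches = backtest(keyword_router, history)
print(f"agreement={agreement:.2f}, review={mismatches}")
```

The list of mismatched case IDs gives staff a concrete set of examples to review before deciding whether a prototype is ready to leave the sandbox.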

The Mechanics of Collaborative Innovation: Shared Tools and Resources

One of the most transformative elements of this initiative is the creation of a decentralized Prompt Library, which serves as a repository for successful AI “recipes” developed by peer agencies. In the traditional procurement model, each city or county would have to independently vet external vendors, negotiate individual contracts, and build solutions from the ground up—a process that is both redundant and expensive. The hub bypasses this bureaucratic red tape by allowing participants to share their breakthroughs. If one jurisdiction develops a highly effective prompt for analyzing housing data, another can borrow and adapt that logic in a matter of days rather than months, significantly accelerating the timeline from conceptualization to deployment.
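As a rough illustration of how a shared prompt might be borrowed and adapted, the Python sketch below stores a template once and fills in local details per jurisdiction. The library contents, the `adapt_prompt` helper, and the placeholder names are assumptions made for this example; the article does not describe how the hub's Prompt Library is actually stored or accessed.

```python
from string import Template

# Hypothetical shared library: prompt name -> template with placeholders.
PROMPT_LIBRARY = {
    "housing_data_summary": Template(
        "You are an analyst for $jurisdiction. Summarize the housing data below "
        "for $audience, and flag any figure that looks inconsistent.\n\n$data"
    ),
}


def adapt_prompt(name: str, **local_values: str) -> str:
    """Fill a shared template with one jurisdiction's local details."""
    # substitute() raises KeyError if a placeholder is left unfilled,
    # so an incomplete adaptation fails loudly instead of silently.
    return PROMPT_LIBRARY[name].substitute(**local_values)


prompt = adapt_prompt(
    "housing_data_summary",
    jurisdiction="Example County",
    audience="the housing committee",
    data="(local records would go here)",
)
print(prompt)
```

Keeping the wording in one shared template and the local details in parameters is what would let a second agency reuse the logic without rewriting it.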

This model of collaborative innovation also plays a vital role in democratizing access to high-level technology for smaller jurisdictions that lack large technical staffs. Many mid-sized cities do not have the budget to employ a team of data scientists or AI engineers, which historically kept them on the sidelines of the digital revolution. By utilizing shared resources and peer-to-peer exchange, these agencies can leverage the collective expertise of the entire hub. This shift toward a shared technical infrastructure reduces the “vendor lock-in” that often plagues government IT departments and ensures that the power of modern technology is available to any agency willing to participate, regardless of its internal technical capacity.

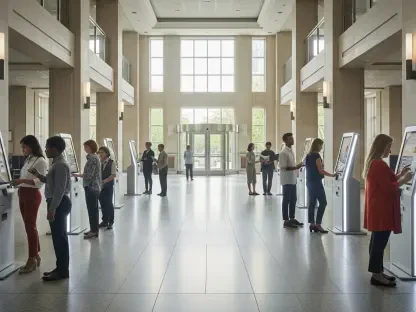

Case Study: San Antonio’s Data-Driven Workforce Development

The practical application of these collaborative tools is clearly visible in the recent efforts of the San Antonio Workforce Development Office. Tasked with managing the “Ready to Work” initiative—a massive $200 million program aimed at providing job training and education to over 15,000 residents—the office faced the challenge of scaling its services without losing the personal touch required for social work. To meet this demand, the city developed AI-powered chatbots designed to assist residents navigating the complexities of the program. These tools were not generic, off-the-shelf bots; they were trained by human staff to maintain a professional yet empathetic tone, ensuring that the technology served as an extension of the agency’s mission rather than a cold, automated gatekeeper.

Beyond resident communication, the San Antonio office leveraged the hub to implement sophisticated media monitoring and outreach strategies. By using AI to analyze geolocation data, officials were able to measure the real-world effectiveness of their marketing campaigns within specific, underserved ZIP codes. Lori Zamora, a leader within the department, emphasized the vision of maximizing the “efficiency of the dollar” through this precision marketing. Instead of broad, unfocused advertising, the city used data to identify exactly which neighborhoods were responding to outreach and which required more direct engagement. This data-driven approach allowed the agency to allocate its marketing budget with surgical precision, ensuring that the $200 million program reached those who needed it most.
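The underlying arithmetic of this kind of ZIP-code analysis can be sketched in a few lines of Python. This is plain aggregation rather than the AI tooling the city used, and everything in it is an assumption for illustration: the record layout, the 1 percent threshold, and the made-up ZIP codes and counts.

```python
from collections import defaultdict

# Illustrative, made-up records: (zip_code, ad_impressions, program_sign_ups).
campaign_rows = [
    ("00001", 4000, 120),
    ("00002", 5000, 25),
    ("00001", 1000, 30),
    ("00003", 2000, 40),
]


def response_rates(rows):
    """Total impressions and sign-ups per ZIP code, then sign-ups / impressions."""
    totals = defaultdict(lambda: [0, 0])
    for zip_code, impressions, sign_ups in rows:
        totals[zip_code][0] += impressions
        totals[zip_code][1] += sign_ups
    return {z: s / i for z, (i, s) in totals.items() if i > 0}


def needs_direct_engagement(rates, threshold=0.01):
    """ZIP codes whose response rate falls below the (assumed) threshold."""
    return sorted(z for z, rate in rates.items() if rate < threshold)


rates = response_rates(campaign_rows)
print(rates)
print(needs_direct_engagement(rates))
```

ZIP codes that fall below the threshold are the ones a team might move from broad advertising to direct outreach.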

Practical Frameworks for Reducing Administrative Burden and Error Rates

The broader goal of implementing AI in the public sector is the systematic reduction of administrative burden, which often acts as a friction point for both employees and residents. Automated tools are being deployed to handle the “tedious” tasks that consume hundreds of staff hours, such as processing invoices, verifying documentation, and cross-referencing eligibility requirements. By shifting the focus of human employees from these repetitive manual tasks to high-touch interactions, agencies can improve the quality of service while simultaneously reducing the rate of human error. This transition is particularly critical as agencies navigate new federal work requirements and complex compliance standards like HR 1, where the margin for error is increasingly thin.

As the initiative progresses, the roadmap for scaling AI involves moving from simple resident inquiries to the management of complex public benefit systems. Artificial intelligence can “plug gaps” in these systems by identifying residents who may be eligible for multiple services but are only enrolled in one, thereby creating a more holistic approach to social safety nets. Looking forward, the objective is a seamless integration in which AI handles the backend logistics of contract management and data evaluation, while human professionals focus on the nuanced decisions that require empathy and ethical judgment. This balanced framework provides a sustainable path for government agencies not only to catch up with the digital age but also to lead it with confidence and accountability.
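The gap-finding step described above reduces to a set comparison once eligibility is known. The Python sketch below is a minimal illustration under that assumption: the `ResidentRecord` schema, the `enrollment_gaps` helper, and the program names are hypothetical, and determining eligibility itself (the hard part) is assumed to happen upstream.

```python
from dataclasses import dataclass, field


@dataclass
class ResidentRecord:
    """Hypothetical record; eligibility is assumed to be determined upstream."""
    resident_id: str
    eligible: set[str] = field(default_factory=set)
    enrolled: set[str] = field(default_factory=set)


def enrollment_gaps(records):
    """Map each resident enrolled in exactly one program to the programs
    they appear eligible for but have not enrolled in."""
    gaps = {}
    for r in records:
        missing = r.eligible - r.enrolled
        if len(r.enrolled) == 1 and missing:
            gaps[r.resident_id] = sorted(missing)
    return gaps


# Illustrative records with placeholder program names.
records = [
    ResidentRecord("r1", {"food", "childcare"}, {"food"}),
    ResidentRecord("r2", {"food"}, {"food"}),
    ResidentRecord("r3", {"food", "training", "childcare"}, {"training"}),
]
print(enrollment_gaps(records))
```

In keeping with the division of labor the article describes, the output is a referral list for a caseworker to review, not an automatic enrollment decision.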

The AI Learning and Innovation Hub has marked a significant turning point for many local government entities that previously felt excluded from the technological revolution. Administrators have found that a shared sandbox environment lets them mitigate the risks that traditionally stalled modernization efforts. Participating leaders have integrated automated tools into their daily operations, allowing a more efficient allocation of taxpayer funds and human resources. The program has shown that collaborative knowledge-sharing can dismantle the bureaucratic silos that often hinder progress in the public sector. Ultimately, these modern frameworks offer agencies a clear pathway to enhance service delivery while maintaining the high standards of reliability the public requires. Professionals in the field recognize that the future of public service depends on the ability to combine machine-scale efficiency with human-centered policy design.