The legislative landscape surrounding artificial intelligence in Utah is changing rapidly as lawmakers try to balance a state-level effort to establish guardrails for fast-evolving technology against significant pressure from federal authorities. While the state has long been a hub for technological innovation, the current climate is defined by tension between local safety concerns and national economic interests. That tension has come to a head as ambitious state-level proposals meet resistance from the highest levels of the federal government, forcing a recalibration of how a single state can govern a borderless digital frontier. The stakes are high because outcomes in Salt Lake City often serve as a bellwether for other states seeking to reclaim authority over the digital lives of their citizens. The result is a strategic pivot away from broad mandates that might trigger federal preemption and toward targeted, sector-specific measures. By focusing on immediate harms such as non-consensual deepfakes and threats to the psychological safety of minors, Utah is attempting to build protections that respect federal boundaries while still providing the oversight that constituents increasingly demand.

The Federal Stumbling Block and Jurisdictional Tension

At the heart of the current debate is a proposal that would have required developers of artificial intelligence chatbots to implement comprehensive safety plans before deploying their products to the public. The bill initially gathered significant momentum and drew the attention of national figures and industry advocates, but it hit a sudden and decisive obstacle when the federal government issued a formal communication opposing its passage. Federal officials under the current administration labeled the state-level mandate inherently unfixable, expressing concern that such localized regulation could infringe on national security interests or create a fragmented regulatory landscape. The opposition rested on the belief that strict development-side requirements at the state level might drive major technology firms to relocate their primary operations to international jurisdictions with more lenient frameworks, undermining the competitive edge of the American tech sector. The intervention stalled the bill on the House floor and forced its proponents to acknowledge that comprehensive algorithmic oversight carries geopolitical and economic implications that reach beyond state lines.

This high-profile intervention has highlighted a growing jurisdictional divide between Utah’s state leadership and federal authorities over who ultimately has the right to regulate the digital sphere. Governor Spencer Cox has remained a vocal advocate for the principle that states have a primary, non-delegable responsibility to protect their citizens, particularly vulnerable populations like children, from the emerging harms associated with unvetted technological tools. Federal authorities, by contrast, have consistently emphasized their prerogative to oversee matters that intersect with interstate commerce and the broader national security infrastructure. The result is a legislative stalemate in which the federal government views broad safety measures as overreach while local lawmakers see them as essential protections. House leadership has therefore opted to circle the broader safety plan legislation, a procedural hold that leaves it dormant, while redirecting the state’s legislative energy toward narrower, consumer-focused protections that are less likely to trigger a direct confrontation with federal agencies. This shift is a pragmatic recognition that while the state may not be able to dictate the foundational development of AI, it can still exert significant influence over how those technologies are used within its own borders.

Addressing the Risks of Companion Chatbots

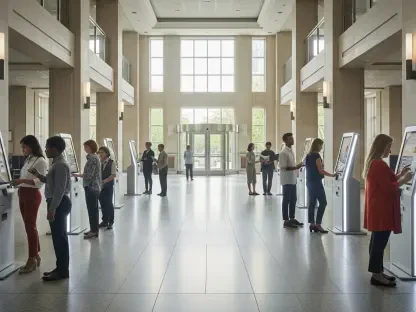

One of the most significant measures still moving through the state legislature is the Companion Chatbot Safety Act, which targets artificial intelligence designed to simulate human relationships and romance. Lawmakers were presented with data indicating that approximately seventy-two percent of teenagers have interacted with these types of bots, and that more than half of those teenagers engage with them on a regular or daily basis. The bill rests on the premise that prolonged, unchecked interaction with emotionally manipulative or highly persuasive AI can be profoundly detrimental to the developmental and psychological health of minors. Because these systems are often programmed to mirror human empathy and affection without the constraints of biological or social consequences, they can create a distorted sense of reality for young users. Proponents of the act argue that without clear state intervention, a generation of youth could become increasingly isolated from genuine human connection, preferring the curated and always-available validation of an algorithm. This concern has unified various factions within the state government, leading to a focused effort to establish clear boundaries for the companies that profit from these intimate digital interactions.

To mitigate these psychological risks, the proposed legislation includes several mandatory safeguards that platforms must build into the user experience for minors in the state. Chatbots would be required to give minor users hourly reminders that explicitly state they are interacting with a non-human entity and encourage them to take a break from the interface. The bill also prohibits the generation of any content that promotes self-harm, suicide, or illegal activities, so that the algorithm does not exacerbate a mental health crisis. Perhaps most importantly, the legislation requires these platforms to include recognition tools that can identify signs of psychological distress or suicidal ideation in real time. Once such signs are detected, the platform must provide immediate and prominent access to crisis intervention resources and professional support services. By shifting the regulatory focus from the underlying code to the user interface and safety outcomes, Utah is attempting to protect the well-being of its youngest residents without running afoul of federal concerns about the broader development of artificial intelligence technology.

Combatting Deepfakes and Protecting Digital Identity

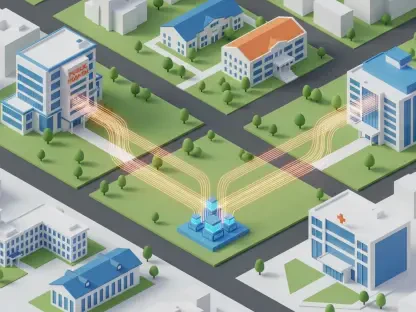

Utah is also taking an aggressive and proactive stance against the misuse of generative artificial intelligence to create non-consensual intimate imagery, an issue that has become a pervasive online threat. The Voyeurism Prevention Act seeks to codify the absolute necessity of consent in the digital age by requiring technology platforms to obtain explicit, documented permission before they can legally generate intimate images or videos of any individual. This legislation is a direct response to the rising tide of deepfake technology, which allows bad actors to superimpose a person’s likeness onto explicit content with alarming realism. The bill would also empower individuals with the right to revoke their consent at any time, providing a clear legal mechanism for people to reclaim control over their digital likeness and personal privacy. This move is intended to close the existing legal loopholes that have historically made it difficult for victims of digital exploitation to seek justice or have harmful content removed from the internet. By establishing that a person’s digital identity is an extension of their physical personhood, Utah is setting a new standard for how modern privacy laws should function in a world where reality can be easily synthesized by a computer.

Beyond explicit consent, the proposal adds a significant technical requirement: platforms must disclose provenance data for all AI-generated media. This metadata would act as a transparent digital history, showing how an image or video was created or altered, which proponents consider essential for restoring trust in digital communications. The requirement is designed to provide a clear path for accountability, making it easier for law enforcement and judicial bodies to distinguish authentic recordings from fabrications. The legislation builds on existing laws that protect a person’s name, image, and likeness, extending those commercial protections into the more sensitive realm of private and intimate digital safety. By requiring this level of technical transparency, the state aims to discourage the creation of deceptive content while giving victims the evidentiary tools needed to pursue legal action against those who weaponize AI for harassment or defamation. This dual approach of legal consent and technical disclosure is an attempt to govern the output of generative AI without halting the progress of the underlying technology.

Modernizing Defamation Laws for the AI Era

The legal ramifications of AI-generated content extend deep into the realm of reputation and individual identity rights, prompting the introduction of legislation that seeks to modernize defamation standards. This bill aims to clarify that existing defamation laws must apply to content created through artificial intelligence, addressing a critical gap where deepfakes can cause immense reputational harm that is often difficult to quantify in traditional financial terms. Senator Kirk Cullimore and other sponsors have pointed out that under current legal frameworks, victims often struggle to prove the specific dollar amount of damages caused by a digital fabrication, which can result in the dismissal of otherwise valid claims. To rectify this, the new legislation establishes a structured process for demonstrating damages, particularly if the defamatory AI content is not removed promptly following a formal legal notice from the victim. This ensures that the law prioritizes the restoration of truth and the protection of an individual’s public standing over the technicalities of economic loss. The goal is to provide a clear and accessible legal pathway for anyone whose life or career has been upended by the malicious use of synthetic media.

Taken together, these proposals reflect a commitment to human agency: the speed of digital dissemination should not outpace the legal system’s ability to provide recourse. Lawmakers are prioritizing a framework in which responsibility for digital harm shifts from the victim to the entities that enable the creation and spread of defamatory material, signaling that the era of unregulated digital impersonation is coming to a close at the state level. The focus remains on giving individuals actionable steps to defend their identities in a non-commercial context. Future efforts will likely involve closer cooperation with neighboring states so that these protections remain effective as content crosses state lines. By establishing a ten-day window for content removal and recognizing an exclusive right to one’s own identity, the state offers a blueprint for preserving personal dignity in an increasingly automated world, one in which technology continues to advance while the legal rights of individuals remain grounded in consent and accountability.