Virginia’s public school classrooms are no longer merely spaces for textbooks and lectures; they have become high-tech laboratories where the rapid integration of artificial intelligence is fundamentally altering how the next generation processes information and develops critical thinking skills. The digital classroom has moved far beyond simple tablets and online textbooks, with over 85% of teachers and students now using generative AI tools in their daily routines. Yet as these applications become as common as calculators, Virginia lawmakers are raising concerns, questioning whether schools are enhancing student intelligence or simply outsourcing it to an algorithm. With state legislation now reaching the floor in Richmond, the Commonwealth has emerged as a primary battleground for the soul of modern education.

The End of the Paper-and-Pencil Era: Virginia’s High-Stakes Bet on AI

The transition from traditional instruction to AI-assisted learning represents one of the most significant pedagogical shifts in a century. Educators once worried about the introduction of basic search engines, but the current wave of generative technology offers immediate, polished answers that bypass the traditional struggle of the writing process. In schools across Virginia, the presence of large language models has forced a re-evaluation of what it means to complete an assignment. While some view these tools as essential productivity boosters for a modern workforce, others see them as a shortcut that threatens to decouple effort from achievement.

The stakes in this legislative session are remarkably high because they involve more than just technology policy; they involve the developmental trajectory of millions of students. Lawmakers are currently tasked with defining the boundaries of “acceptable use” at a time when the technology itself is evolving faster than the bureaucratic processes designed to manage it. This tension has created a sense of urgency, as the Commonwealth seeks to balance the undeniable benefits of innovation with the necessity of preserving the fundamental human elements of the learning experience.

From Innovation to Cognitive Crisis: Why New Rules Are Surfacing

The push for regulation is fueled by a growing body of research suggesting that the “digital-native” generation faces a unique learning hurdle. Neuroscientists have linked excessive screen time, which now averages up to eight hours daily for youth, to measurable declines in memory, literacy, and general IQ. Because generative AI adds yet another screen-based activity to the school day, this “creativity and learning crisis” has turned AI from a futuristic luxury into a source of immediate concern for state leaders. There is a palpable fear that traditional learning methods are being displaced by effortless, automated interfaces, producing a generation that struggles with deep focus and information retention.

Beyond the cognitive impact, the surge in AI usage has sparked concerns about the erosion of student self-reliance. When an algorithm can draft an essay or solve a complex calculus problem in seconds, the skills that students would otherwise build through the cognitive labor of mastering those subjects can begin to atrophy. Virginia’s move toward regulation is an attempt to intervene before these habits become permanent. The goal is to ensure that technology serves as a scaffold for learning rather than a replacement for it, maintaining the intellectual rigor that a public education is intended to provide.

The Legislative Blueprint: Proposed Guardrails for the Commonwealth

Virginia’s approach to AI is not a monolith; it is a multi-pronged strategy designed to balance experimentation with strict oversight. Senator Stella Pekarsky’s proposal, Senate Bill 394, envisions a controlled environment running through 2030, in which the Board of Education establishes formal “safe, ethical, and equitable” standards for AI use. This pilot-program approach lets districts experiment with integration while remaining under the watchful eye of state regulators, who can adjust policies based on real-world outcomes and student performance data.

In contrast, House Bill 1186, proposed by Delegate Sam Rasoul, takes a more cautious path by seeking to prohibit the use of AI chatbots for direct instructional tasks. This legislation stems from concerns that students might form unintended emotional attachments to software or inadvertently share sensitive private data with third-party developers. Furthermore, companion bills such as SB 568 and HB 1486 focus on students’ digital health, aiming to limit overall screen time and educate students on the addictive nature of digital devices. Together, these bills represent a comprehensive effort to protect the physical and psychological well-being of the student body.

Expert Perspectives: The Human Element vs. Machine Efficiency

The debate in Richmond is heavily influenced by voices from the front lines of academia and neuroscience. A survey of over 1,000 higher education faculty members revealed that 90% believe AI will diminish critical thinking, while 83% fear it will further erode already thinning attention spans. These experts argue that the efficiency of AI comes at a hidden cost: the loss of the “productive struggle” that is essential for deep learning. When the path to an answer is too smooth, the brain does not build the durable neural pathways required for long-term mastery.

Case studies like Alpha Schools illustrate the radical extreme of this trend, where AI replaces traditional teachers for core subjects in a “two-hour learning model.” The model has sparked a vigorous debate over whether education should be a solitary digital experience or a social, human one. Experts like Eddie Watson argue that Virginia has reached an “inflection point” where the goal must be to ensure that human judgment remains the centerpiece of the classroom. The consensus among many thought leaders is that intentional leadership is required to prevent students from becoming over-reliant on generative tools at the expense of their own cognitive development.

Practical Strategies for Navigating the AI Frontier in Education

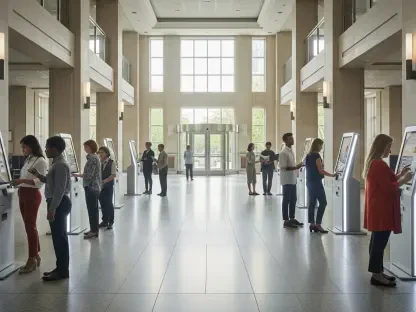

As Virginia lawmakers move toward their legislative deadlines, school districts are adopting specific frameworks to prepare for these new regulations. They are prioritizing human-led instruction by positioning AI as a supplemental research partner rather than a primary source for final assignments, which keeps the core of every project rooted in student inquiry. Ethical-usage training is also becoming a standard part of the curriculum, teaching students the vital difference between using technology for productivity and using it as a shortcut that bypasses necessary cognitive labor.

School boards are also establishing rigorous data privacy protocols to keep student information from being harvested by external developers. They are shifting grading rubrics to value the process of learning, such as drafts, oral defenses, and live demonstrations, over the final product, which automated tools can more easily replicate. Through these actionable steps, the Commonwealth seeks to create a resilient educational environment. The ultimate aim is to foster a generation that views AI as a tool to be mastered rather than a master to be followed, securing students’ intellectual independence for years to come.