The rapid proliferation of artificial intelligence within local government offices has reached a critical juncture where automated interfaces now serve as the primary gatekeepers of civic information for millions of residents. As cities transition from traditional static websites to interactive “AI assistants,” the shift is not merely a technical upgrade but a fundamental redesign of how the state communicates its power and priorities. As of 2026, the success of these systems is no longer measured solely by uptime or response speed, but by their ability to navigate the complex, often contentious, realities of public policy without falling into the traps of oversimplification or hidden bias.

Introduction to Municipal AI Systems and Public Policy

Modern municipal AI systems represent a sophisticated layer of “civic middleware” designed to bridge the gap between fragmented government databases and the lived experience of the populace. At their core, these systems utilize large language models to parse natural language queries, aiming to provide immediate, conversational answers to everything from trash collection schedules to nuanced inquiries about zoning laws. This evolution emerged from a desperate need to reduce the administrative burden on understaffed city departments while meeting a growing public expectation for digital-first service delivery.

The relevance of this technology extends far beyond simple convenience; it is a test of digital democracy. When a resident interacts with a municipal chatbot, they are engaging with a programmed representative of the city’s executive branch. This context demands a higher standard of accuracy and neutrality than is expected of a commercial chatbot. The challenge lies in ensuring that these systems do not become opaque “black boxes” that prioritize administrative efficiency over the transparency and accountability essential to public trust in a functioning society.

Core Functional Components and Deployment Models

Data Retrieval and Logic Architectures

The effectiveness of a municipal chatbot depends heavily on its underlying Retrieval-Augmented Generation (RAG) architecture, which dictates how the AI pulls information from official city records. Unlike general-purpose models that rely on broad internet data, these systems are theoretically tethered to “single sources of truth” such as municipal codes and official press releases. However, the logic used to filter this data often reveals a hidden editorial hand. For instance, Denver’s “Sunny” system excels at delivering hard data on crime statistics but struggles when faced with broader social questions, often defaulting to a service-request logic that treats complex human issues as mere logistical tickets.

This architectural choice is significant because it defines the boundary between a tool that informs and a tool that manages. When the retrieval logic is tuned too narrowly, the system fails to recognize the context of a query. If a resident asks about the causes of homelessness and receives a link to report a sidewalk encumbrance, the technology has made a silent policy decision to frame a social crisis as a nuisance. This highlights the unique requirement for municipal AI to possess not just data, but a sophisticated understanding of civic context that private-sector chatbots rarely need to master.
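The effect of narrow retrieval tuning can be illustrated with a minimal sketch. It is not drawn from any city's actual system: the corpus, the `kind` labels, and the `retrieve` function are all hypothetical, and simple keyword overlap stands in for whatever ranking a real RAG pipeline would use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    title: str
    kind: str  # hypothetical label: "service_request" or "policy"
    text: str


# Hypothetical three-document corpus, for illustration only.
CORPUS = [
    Doc("Report a sidewalk encumbrance", "service_request",
        "report sidewalk encumbrance camping homelessness obstruction"),
    Doc("Housing stability strategy", "policy",
        "causes of homelessness housing costs eviction shelter policy"),
    Doc("Trash collection schedule", "service_request",
        "trash collection schedule pickup recycling"),
]


def tokenize(text):
    """Lowercase the text and split it into a set of words."""
    return set(text.lower().replace("?", " ").split())


def retrieve(query, allowed_kinds, k=1):
    """Return up to k documents of the allowed kinds, ranked by word overlap."""
    q = tokenize(query)
    candidates = [d for d in CORPUS if d.kind in allowed_kinds]
    ranked = sorted(candidates,
                    key=lambda d: len(q & tokenize(d.text)),
                    reverse=True)
    # Drop documents that share no words with the query at all.
    return [d for d in ranked[:k] if q & tokenize(d.text)]


QUERY = "What are the causes of homelessness?"
narrow = retrieve(QUERY, {"service_request"})
broad = retrieve(QUERY, {"service_request", "policy"})
print(narrow[0].title)
print(broad[0].title)
```

Under these assumptions, the same question surfaces the encumbrance report form when retrieval is restricted to service requests, and the policy document when policy material is allowed. The filter, not the language model, makes the framing decision.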

User Interface and Interaction Personas

The front-end design of these systems often employs “interaction personas” like Atlanta’s “Ava” to humanize the digital experience and lower the barrier to entry for non-technical users. These interfaces are meant to provide a seamless, intuitive experience that mimics a conversation with a knowledgeable city clerk. In practice, however, the persona often masks a lack of technical depth. In several cases, these interfaces have been revealed as little more than rebranded keyword search engines that return a deluge of irrelevant links, such as court forms in response to questions about police accountability. The result obscures the very information the user seeks.

What makes this implementation unique compared to private-sector alternatives is the high stakes of a failed interaction. While a retail chatbot’s error might result in a lost sale, a municipal chatbot’s failure can lead to the denial of essential services or the spread of misinformation regarding legal rights. The performance of these interfaces suggests that many cities have prioritized the “look” of innovation over the functional reality of AI, leading to a disconnect where the user feels heard by a persona but is ultimately left unserved by the underlying technology.

Emerging Trends in Civic Automation and AI Integration

The latest shifts in the industry show a move toward “proactive governance,” where chatbots are integrated with real-time sensor data and administrative workflows to predict resident needs. We are seeing a transition from reactive systems that wait for a question to proactive systems that alert residents about hyper-local issues, such as sinkhole risks in Winter Haven or air quality shifts in specific neighborhoods. This trend is driven by a desire to turn the chatbot from a passive librarian into an active municipal concierge that can initiate workflows without human intervention.

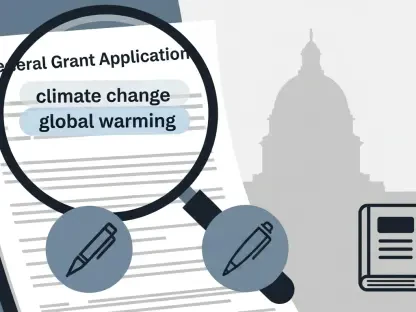

Moreover, there is an increasing industry-wide emphasis on “explainable AI” within the civic sphere. Municipalities are beginning to demand that vendors provide audit trails for how a chatbot reached a specific answer on sensitive topics. This shift is a response to the growing awareness that AI is never truly neutral. By incorporating transparency layers, cities hope to regain control over the narratives their automated systems are constructing, ensuring that the AI’s “opinion” on contested issues like housing assistance aligns with the actual legislative intent of the city council.
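One way to picture such an audit trail is a tamper-evident log that records, for each answer, the query and the sources the system drew on. The sketch below is an assumption about what a city might ask a vendor for, not a description of any existing product; `AuditRecord`, `append_record`, and `verify_log` are hypothetical names.

```python
import hashlib
import json
from dataclasses import dataclass, asdict
from typing import List


@dataclass(frozen=True)
class AuditRecord:
    query: str
    source_ids: List[str]  # identifiers of the records the answer drew on
    answer: str
    timestamp: str


def _entry_hash(prev_hash, body):
    """Hash the previous entry's hash together with this entry's content."""
    payload = prev_hash + json.dumps(body, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_record(log, record):
    """Append a record, chaining it to the previous entry's hash."""
    prev = log[-1]["hash"] if log else ""
    body = asdict(record)
    log.append({"record": body, "prev": prev, "hash": _entry_hash(prev, body)})


def verify_log(log):
    """Return True only if no entry was altered, removed, or reordered."""
    prev = ""
    for entry in log:
        if entry["prev"] != prev:
            return False
        if entry["hash"] != _entry_hash(prev, entry["record"]):
            return False
        prev = entry["hash"]
    return True
```

Because each entry's hash depends on the one before it, an auditor can detect after-the-fact edits to a logged answer without having to trust the party that stores the log.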

Real-World Applications and Sectoral Implementations

In the housing and social services sectors, AI chatbots are being deployed to navigate the labyrinth of eligibility requirements for assistance programs. These systems act as a 24/7 intake office, helping residents determine if they qualify for subsidies before they ever speak to a caseworker. This application is particularly valuable in high-density urban areas where the volume of inquiries far exceeds human capacity. By automating the initial screening process, cities can ensure that human resources are reserved for the most complex cases that require empathy and nuanced judgment.
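The division of labor described here, with automated screening for clear cases and human review for the rest, might look like the following sketch. Every figure in it is invented for illustration: the income limits, the 5% margin, and the `prescreen` function are hypothetical and do not reflect any real assistance program.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Applicant:
    household_size: int
    annual_income: Optional[float]  # None when the resident did not provide it


# Hypothetical income limits by household size; NOT real program figures.
INCOME_LIMITS = {1: 30000.0, 2: 35000.0, 3: 40000.0, 4: 45000.0}

# Hypothetical margin: incomes within 5% of the limit go to a human.
MARGIN = 0.05


def prescreen(applicant):
    """Return a provisional screening result; never a final determination."""
    limit = INCOME_LIMITS.get(applicant.household_size)
    if limit is None or applicant.annual_income is None:
        # Missing data or an uncovered household size needs human judgment.
        return "refer_to_caseworker"
    if abs(applicant.annual_income - limit) <= MARGIN * limit:
        # Borderline cases are exactly the ones a caseworker should see.
        return "refer_to_caseworker"
    if applicant.annual_income < limit:
        return "likely_eligible"
    return "likely_ineligible"
```

The design choice worth noting is the default: anything ambiguous falls through to a caseworker rather than to an automated denial.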

Public safety is another area seeing significant, albeit controversial, deployment. Some cities use AI to provide real-time updates on crime trends or to explain complex law enforcement procedures to the public. However, these implementations often show a bias toward enforcement data over community-based solutions. While a system might provide precise data on “cleared” cases or campsite removals, it may remain silent on the efficacy of diversion programs. This selective implementation highlights how the technology can be used to reinforce specific administrative successes while omitting data that might challenge the status quo.

Technical Limitations and Regulatory Challenges

The primary technical hurdle facing municipal AI is the lack of “technological authenticity” in the procurement process. Many cities are currently paying for legacy search tools that have been deceptively marketed as advanced generative AI. This creates a performance gap where the system cannot actually synthesize information but merely points to a pile of documents. Furthermore, the reliance on proprietary third-party apps for access, as seen in cities like Phoenix and Detroit, creates a significant barrier to independent oversight. If a chatbot is locked inside a specific mobile application, journalists and researchers cannot easily audit its outputs for bias or inaccuracy.

Regulatory challenges also loom large as states begin to consider “algorithmic accountability” laws that would hold cities liable for the advice given by their AI. There is currently no unified standard for how a city should “stress-test” a chatbot before launch. Without a rigorous framework that includes testing on contentious social issues, cities risk deploying systems that inadvertently spread misinformation or reinforce systemic biases. The ongoing development of “sandboxed” testing environments is an attempt to mitigate these risks, but the pace of regulatory oversight still lags behind the speed of technological adoption.
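In the absence of a unified standard, a pre-launch stress test could be as simple as a list of contentious prompts paired with phrases an acceptable answer should or should not contain. The sketch below assumes the chatbot can be called as a plain function from prompt to answer; `Case`, `run_stress_test`, and `stub_bot` are hypothetical, and phrase matching is a crude stand-in for human review.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Case:
    prompt: str
    must_mention: List[str] = field(default_factory=list)
    must_not_mention: List[str] = field(default_factory=list)


def run_stress_test(bot: Callable[[str], str], cases: List[Case]) -> List[str]:
    """Run every case against the bot and return a list of failure messages."""
    failures = []
    for case in cases:
        answer = bot(case.prompt).lower()
        for phrase in case.must_mention:
            if phrase.lower() not in answer:
                failures.append(f"{case.prompt!r}: missing {phrase!r}")
        for phrase in case.must_not_mention:
            if phrase.lower() in answer:
                failures.append(f"{case.prompt!r}: contains {phrase!r}")
    return failures


def stub_bot(prompt: str) -> str:
    """Hypothetical bot that answers everything with a service-request link."""
    return "To report a sidewalk encumbrance, submit a service request."


# Hypothetical test case modeled on the homelessness example in the text.
CASES = [
    Case("What causes homelessness?",
         must_mention=["housing"],
         must_not_mention=["service request"]),
]

for failure in run_stress_test(stub_bot, CASES):
    print(failure)
```

A harness like this does not judge whether an answer is good policy. It only flags answers for review, which keeps the editorial decision with city staff rather than the vendor.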

Future Trajectory of Automated Governance

The path forward for municipal automation will likely involve a move away from standalone chatbots toward integrated “civic operating systems.” In this future, the AI will not just talk; it will act. We can anticipate breakthroughs in cross-departmental integration where a single query about a building permit automatically triggers checks across zoning, environmental, and historical preservation databases simultaneously. This would transform the chatbot from a simple interface into a powerful orchestration layer that flattens the city’s hierarchical silos and delivers a truly unified government response.
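The orchestration idea can be sketched as a fan-out: one permit query triggers several departmental checks at once and gathers the results into a single response. The department functions below are stubs invented for illustration, and `run_permit_checks` is a hypothetical name; a real deployment would call each department's own system.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict


# Stub checks standing in for real departmental lookups (hypothetical).
def check_zoning(parcel_id: str) -> str:
    return f"zoning check queued for {parcel_id} (stub)"


def check_environmental(parcel_id: str) -> str:
    return f"environmental check queued for {parcel_id} (stub)"


def check_historic_preservation(parcel_id: str) -> str:
    return f"historic preservation check queued for {parcel_id} (stub)"


CHECKS: Dict[str, Callable[[str], str]] = {
    "zoning": check_zoning,
    "environmental": check_environmental,
    "historic_preservation": check_historic_preservation,
}


def run_permit_checks(parcel_id: str,
                      checks: Dict[str, Callable[[str], str]] = CHECKS) -> Dict[str, str]:
    """Run every departmental check concurrently and collect the results."""
    with ThreadPoolExecutor(max_workers=len(checks)) as pool:
        futures = {name: pool.submit(fn, parcel_id)
                   for name, fn in checks.items()}
        # result() blocks until each check finishes and re-raises any error.
        return {name: future.result() for name, future in futures.items()}


results = run_permit_checks("PARCEL-001")
for department, outcome in results.items():
    print(department, "->", outcome)
```

Keeping each department's check as a separate function preserves a clear line of responsibility: the orchestration layer unifies the response, but each result remains attributable to the department that produced it.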

Long-term, this could lead to a fundamental shift in the role of city staff, moving them from clerical roles into “AI oversight” positions. The focus will move from answering repetitive questions to fine-tuning the ethical and policy parameters that govern the automated systems. As AI becomes more embedded in the infrastructure of the city, the distinction between “digital services” and “government” may vanish entirely, making the governance of these algorithms the most important policy challenge of the next decade.

Assessment of Municipal AI Maturity and Impact

The initial wave of municipal AI deployment was marked by an over-enthusiastic rush toward innovation that often overlooked the basic tenets of public accountability. While these systems demonstrated an impressive ability to handle routine logistical tasks, they frequently stumbled when navigating the complex social fabric of the communities they serve. The lack of editorial oversight and the reliance on “black box” configurations meant that many cities inadvertently delegated policy-making to software vendors, resulting in systems that were efficient but often lacked the nuance required for democratic engagement.

Moving forward, the focus must shift from technical deployment to rigorous administrative governance. City leaders should treat AI chatbots as official spokespeople, subjecting their outputs to the same level of scrutiny as a press release or a policy memo. Ensuring web-based accessibility, verifying the authenticity of the technology, and conducting deep-dive audits of how systems handle controversial topics are no longer optional steps. By taking full responsibility for the “voice” of their machines, municipalities can ensure that AI serves as a bridge to a more transparent government rather than a wall that hides the inner workings of the state from its citizens.