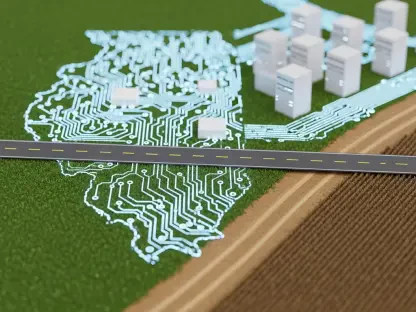

China’s new plan for the Internet of Things reframed progress not as a race to add endpoints but as a systemic rebuild that fuses devices, networks, data, and AI into one coordinated fabric capable of making and executing decisions at scale across factories, streets, warehouses, and homes with measurable returns. That pivot rested on a clear distinction: raw connectivity was necessary, but the next tranche of value would come from “intelligent operation of all things,” where terminals and edges no longer waited for instructions from distant clouds but collaborated with them, closing loops from sensing to actuation in real time. The policy outlined headline targets—tens of billions of connections and a core industry above 3.5 trillion yuan by 2028—yet it insisted the real work sat deeper, in operating systems, multi-network integration, unified data, and an AI stack redesigned for terminals and edges. This was not a single-technology bet; it was an architectural stance with guardrails, rewarding those who could prove return on investment through throughput gains, downtime cuts, energy savings, and safety improvements that could be verified and audited.

Policy Context and Core Thesis

From Connections to Intelligence

The policy elevated IoT from a patchwork of vendor-specific modules and gateways into a national infrastructure guided by an AI-led execution layer. That shift redirected incentives: instead of celebrating device counts or module shipments, the plan prioritized end-to-end loops that start with full-domain sensing and end with verifiable actions (tuning a line, rerouting a vehicle, adjusting a building chiller) without round-tripping every decision to the cloud. To make this feasible, the blueprint distributed intelligence across a terminal–edge–cloud collaboration pattern. Lightweight large models and agents would live on industrial controllers, cameras, and gateways, while the cloud coordinated learning, governance, and fleet management. This approach responded to practical bottlenecks seen in earlier deployments: silos that trapped data, cloud dependence that added latency and cost, and disjointed standards that undermined scale. By embedding autonomy at the edges, the strategy targeted resilient operations in factories, logistics hubs, and city systems where milliseconds and watts matter.

IoT as National Infrastructure

Positioned within new-type industrialization, the plan defined next-generation infrastructure around four traits with operational meaning. Full-domain sensing expanded what terminals can perceive, from high-resolution vision and micro–nano displacement to flexible tactile and inertial signals, so models could form a truer picture of the world. Ubiquitous intelligent interconnection promised seamless data paths, but with intelligence placed where it best fits: local on-device control for immediacy, edge aggregation for coordination, and cloud oversight for optimization. Multi-network integration accepted heterogeneity as a feature, not a flaw, combining NB-IoT, LTE-Cat 1, and 5G RedCap with gigabit optical and WLAN to match the right pipe to the right workload. Security and reliability scaled with identity and governance, using IPv6-by-default, standardized metadata—“one object, one code, one number, one data”—and distributed digital identity to harden provenance and access. Taken together, the stance recast IoT as an engineered system with performance, safety, and economics measured in production metrics, not lab demos.

Architecture and Networks

Terminal–Edge–Cloud as the System Backbone

The plan’s backbone was a unified terminal–edge–cloud platform that aggregated computing power and normalized data flows across tiers, using shared services for device onboarding, heterogeneous data access, and integrated governance. Compatibility mattered: terminals could connect over gigabit optical backbones, 4G including LTE-Cat 1, NB-IoT for massive low-power reach, and 5G including RedCap for low-latency resilience at lower device costs. On top, an application layer offered cross-platform development kits, reducing friction for integrators who want to build once and deploy across factories, warehouses, and municipal systems. This architecture encouraged a separation of concerns: terminals handled immediate inference and control, edges synchronized across clusters and handled heavier fusion jobs, and clouds trained models, orchestrated fleets, and enforced policy. For instance, an industrial camera at a stamping line could detect surface defects locally via a quantized model, send features to an edge node for lot-level analytics, and reserve cloud compute for periodic model refreshes validated against high-quality datasets.

High–Low Network Mix for Cost–Performance Balance

Instead of insisting on top-end 5G everywhere, the policy endorsed a practical stack. NB-IoT remained the workhorse for hard-to-reach, low-throughput endpoints—pipeline sensors, water meters in dense basements, remote e-ink displays—delivering multi-year battery life and deep coverage. LTE-Cat 1 served mid- and low-speed, cost-sensitive devices that need reliable two-way communications and frequent interactions, such as handhelds, point-of-sale terminals, and many industrial controllers. 5G RedCap provided reduced-capability 5G that retained key 5G traits like low latency and high reliability while easing bill of materials and power budgets, suiting machine vision, mobile robots, and vehicular terminals. Converged with wired Ethernet and WLAN, the mix supported fixed–mobile and wide–narrow combinations so a factory could backhaul machine data over fiber, coordinate robots on RedCap, and scatter NB-IoT for ambient environmental sensing. Cost, coverage, and latency were tuned per scenario, with network slicing and QoS policies managing critical traffic without overprovisioning.
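The mapping from workload to network tier described above can be sketched as a small decision function. This is a minimal illustration, not anything specified by the plan: the `Workload` fields, the `pick_network` name, and every numeric threshold are assumptions chosen only to make the trade-offs concrete.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    peak_kbps: float       # expected peak uplink throughput
    max_latency_ms: float  # tightest latency the application tolerates
    battery_powered: bool  # True if multi-year battery life is a goal
    mobile: bool           # True if the device moves around the site


def pick_network(w: Workload) -> str:
    """Map a workload to one tier of the high-low mix.

    All thresholds below are illustrative assumptions, not plan figures.
    """
    # Fixed, very high-throughput equipment is backhauled over wire.
    if not w.mobile and w.peak_kbps > 100_000:
        return "fiber/Ethernet"
    # Tight latency or video-class throughput calls for RedCap.
    if w.max_latency_ms < 50 or w.peak_kbps > 5_000:
        return "5G RedCap"
    # Tiny, delay-tolerant, battery-constrained endpoints fit NB-IoT.
    if w.battery_powered and w.peak_kbps <= 100 and w.max_latency_ms >= 1_000:
        return "NB-IoT"
    # Everything else falls to the mid-tier cellular option.
    return "LTE-Cat 1"
```

In practice the decision would also weigh coverage surveys, module cost, and slicing policy, which a four-branch function cannot capture.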

OS Unification to Fight Fragmentation

A fragmented OS landscape had long slowed IoT rollouts by forcing bespoke integrations and limiting cross-vendor portability. The plan countered with R&D into microkernel designs, virtualization, and strong security architectures to converge on open-source, energy-efficient, customizable IoT operating systems. Microkernels offered isolation and deterministic behavior, crucial for mixed-criticality workloads where a perception task must not starve a safety loop. Virtualization enabled secure multi-tenant edges in brownfield sites, letting a logistics operator host vision analytics, access control, and energy management on the same gateway with hard resource boundaries. A converged OS layer also meant better toolchains: standardized drivers for high-end sensors, unified update frameworks that can roll out quantized models safely, and attestation that proves firmware and runtime integrity. For developers, this trimmed time-to-field. For operators, it reduced lifecycle risk via predictable updates and security patches. Compatibility with mainstream ecosystems—such as OpenHarmony derivatives, Linux-based distributions, and real-time OS kernels—was part of a migration path that respected existing assets while steering toward common stacks.

AI, Sensing, and Embodied Intelligence

Smarter Terminals, Not “Blind Antennas”

The document cast terminals as intelligent actors rather than passive data forwarders. By integrating AI with 5G, human–machine interfaces, and edge computing, terminals gained three upgraded abilities. First, accurate local analysis: on-device inference against streaming sensor inputs enabled real-time control, like a servo adjusting feed rates within a few milliseconds after detecting chatter via vibration spectra. Second, intelligent decision-making: embedded agents applied rules and learned policies to act safely without constant cloud calls, such as a smart breaker preemptively isolating a line segment when harmonics exceed thresholds. Third, convenient interaction: context-aware interfaces that adapt to users and environments, from voice-guided maintenance terminals in noisy workshops to gesture-aware robots that yield to nearby workers. This triad supported mixed autonomy: terminals decide within envelopes, edges arbitrate across teams, and clouds refine strategies. Latency dropped, bandwidth demands eased, and privacy improved because fewer raw signals left the premises.

Upgraded Sensing as the New Differentiator

The plan underscored sensing as the substrate for reliable autonomy. Passive IoT extended reach with energy harvesting and ultra-low-power tags, enabling status checks where wiring is impractical. Multi-modal perception stitched together visual, acoustic, tactile, inertial, and environmental signals so agents could disambiguate tricky scenes—steam clouds on a line, reflective packaging in warehouses, or rain-speckled lenses in outdoor sites. High-precision positioning, including RTK and UWB, combined with swarm-aware sensing to let fleets of automated guided vehicles coordinate in dense aisles without collisions. Investment in mid- to high-end sensors—micro–nano displacement transducers for sub-micrometer motion, flexible tactile arrays for grippers, high dynamic range cameras for weld inspection, low-drift IMUs for mobile platforms, and calibrated gas sensors for leak detection—moved performance bottlenecks from hardware to algorithms. Paired with calibration pipelines and time-synchronization (PTP/IEEE 1588), fusion stacks could align events with microsecond accuracy, empowering agents to reason about cause and effect in dynamic environments and to validate actions against safety constraints.
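Once sensors share a synchronized clock, a fusion stack still has to pair readings that belong to the same physical event. The sketch below shows one simple way to do that, assuming both streams are sorted lists of timestamps in microseconds on a common clock; the function name `align_events` and the tolerance parameter are hypothetical.

```python
from bisect import bisect_left


def align_events(ref_ts, other_ts, tolerance_us):
    """Pair each reference timestamp with the nearest timestamp from another
    sensor, keeping only pairs that differ by at most tolerance_us.

    Both lists are assumed sorted and expressed in microseconds on a shared
    clock (for example, one disciplined by PTP).
    """
    pairs = []
    for t in ref_ts:
        i = bisect_left(other_ts, t)
        # Candidates are the neighbours just before and just after t.
        candidates = other_ts[max(i - 1, 0): i + 1]
        if not candidates:
            continue  # the other stream is empty
        nearest = min(candidates, key=lambda c: abs(c - t))
        if abs(nearest - t) <= tolerance_us:
            pairs.append((t, nearest))
    return pairs
```

Nearest-neighbour matching is only one option; interpolation or windowed joins may suit sensors with very different sampling rates.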

Lightweight Large Models and Agentic Systems

A decisive theme was the “descent” of large models to terminals and edges in lightweight form. Rather than confining multimodal or code-capable models to data centers, the plan called for versions with hundreds of millions to low billions of parameters to inhabit industrial arms, edge controllers, intelligent gateways, cameras, vehicles, and home robots. Techniques such as post-training quantization to 8- or 4-bit, structured pruning, knowledge distillation, and sparsity-aware compilers reduced footprints while preserving accuracy where it counts. In a stamping plant, an edge controller could host a distilled vision-language model to flag atypical defects and recommend corrective actions, with deterministic runtimes enforced by real-time schedulers. Gateways in logistics hubs could execute policy enforcement at the perimeter—denying pallet exits when weight, barcode, and camera evidence disagree—without cloud calls. Consumer devices, like high-end cleaning robots, ran room-understanding models locally, negotiating around pets and cords with privacy intact. Across tiers, feedback loops closed the learning cycle: terminals logged edge cases, edges curated difficult samples, and clouds retrained models against high-quality datasets before rolling updates with canary releases and rollback safety.
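To make the quantization step concrete, here is a minimal sketch of symmetric, per-tensor post-training quantization to signed 8-bit values. It is a teaching example in pure Python, assuming a non-empty list of float weights; production toolchains add calibration data, per-channel scales, and hardware-specific kernels, none of which are shown. The function names are hypothetical.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization of float weights to the range [-127, 127].

    Returns the integer codes and the scale needed to map them back.
    Assumes `weights` is a non-empty list of floats.
    """
    max_abs = max(abs(w) for w in weights)
    # Guard against an all-zero tensor, where any scale works.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate float weights from integer codes."""
    return [c * scale for c in codes]
```

Each weight is stored in one byte instead of four, and the reconstruction error per weight is bounded by about half the scale, which is why accuracy often survives on well-conditioned layers.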

Value Chain and Business Models

Profit Shifts to the Intelligent Execution Layer

As module and connectivity margins compressed, profits gravitated toward the layer that converts perception into outcomes. The policy’s emphasis on closed loops (perception–connection–data–model–execution) aligned revenue with ROI metrics: higher yield, longer mean time between failures, less unplanned downtime, fewer kilowatt-hours per shift, and lower incident rates. Providers that bundle systems, not parts, gained leverage. In discrete manufacturing, for instance, a solution that fuses high-resolution vision, tactile sensing on grippers, and an on-device anomaly detector could reduce scrap by a measurable margin, justifying subscription pricing tied to savings. In energy management, edge-based model predictive control trimmed chiller loads during peak hours, with verified savings reported through metered baselines. Logistics saw robots and handhelds coordinating via RedCap and WLAN, where autonomous slotting cut travel distances and queue times, translating into throughput guarantees in service-level agreements. The plan favored contracts where performance was observable and auditable, displacing one-off integration fees with recurring operation revenues anchored to concrete outcomes.

Who Gains in the New Stack

Four groups stood to benefit most from the shift. Terminal-side AI chip and edge hardware makers answered the co-design challenge with low-power accelerators, high-bandwidth memory hierarchies, and compression-aware toolchains that make 4-bit inference feasible on gateways and controllers. Vendors that support major runtimes (ONNX, TensorRT-like engines, and TVM derivatives) along with secure boot and remote attestation gained preference in procurement. Vertical service providers built industry-specific models and agents, since safety-critical tasks in chemicals, oilfields, or rail demanded precision that generic models could not guarantee. Their assets included libraries of validated patterns, data-cleaning pipelines, and monitoring that detects model drift in production. High-end sensor firms that deliver visual HDR sensors, low-noise IMUs, UWB anchors, and flexible tactile arrays, paired with calibration and synchronization kits, moved from “component suppliers” to “system performance enablers.” Finally, system-type enterprises integrated solution + product + service in industry, and solution + construction + operation in cities, absorbing responsibility for outcomes over the lifecycle through city information modeling (CIM) platforms and digital twins that track assets, work orders, and policy compliance.

Priority Scenarios Across Sectors

Industrial deployments clustered around lines and cells where closed-loop control could be verified. Edge controllers hosted on-device anomaly detectors for bearings and cutters; collaborative robots used tactile feedback to avoid part bruising; and computer vision on welds, paint, or stamping spots flagged defects early, feeding upstream tuning to cut rework. In logistics, handhelds on LTE-Cat 1 coordinated with mobile robots on RedCap; gateways fused barcode scans, weight checks, and camera analytics to prevent missorts; and yards used UWB plus computer vision for autonomous slotting, lowering dwell time. Urban governance scenarios focused on environmental monitoring with passive IoT, risk alerts tied to storm drains and bridges, and emergency linking across utilities and traffic control. CIM and digital twins governed lifecycle operations (permitting, construction, inspection, and maintenance), with IPv6 identities mapping physical assets to virtual counterparts. At home, cleaning robots and hubs provided on-device services that respect privacy, recognizing rooms and routines locally and syncing summaries, not raw feeds, to the cloud. Across these domains, the consistent thread was agentic behavior that acts within guardrails and proves its worth in numbers the owner already tracks.
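The gateway-side fusion of barcode, weight, and camera evidence mentioned for logistics can be illustrated with a short rule check that runs entirely on the device. This is a sketch under stated assumptions: the `PalletEvidence` fields, the `allow_exit` function, and the 2% weight tolerance are all hypothetical and stand in for whatever a real deployment would define.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PalletEvidence:
    barcode_sku: str    # SKU decoded from the barcode scan
    camera_sku: str     # SKU predicted by the on-gateway vision model
    measured_kg: float  # weight reported by the floor scale
    expected_kg: float  # weight listed on the shipping manifest


def allow_exit(e: PalletEvidence, weight_tol: float = 0.02) -> tuple[bool, list[str]]:
    """Return (allowed, reasons). The pallet may leave only if all evidence agrees.

    weight_tol is the permitted relative deviation (an assumed default of 2%).
    """
    reasons = []
    if e.barcode_sku != e.camera_sku:
        reasons.append("barcode/camera mismatch")
    if e.expected_kg <= 0:
        reasons.append("invalid expected weight")
    elif abs(e.measured_kg - e.expected_kg) / e.expected_kg > weight_tol:
        reasons.append("weight out of tolerance")
    return (not reasons, reasons)
```

Returning the list of reasons alongside the decision keeps the outcome auditable, which matches the article's emphasis on actions that can be verified after the fact.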

Implementation Rails and Governance

Compute–Power Co-Optimization

Edge AI at scale lived or died by power and thermal ceilings, so the plan insisted on co-design from chip to scheduler. At the silicon layer, vendors advanced heterogeneous SoCs—CPU clusters for control, DSPs for signal processing, NPUs for dense linear algebra—with memory hierarchies tuned for streaming sensors and small-batch inference. On the model side, compression, quantization, pruning, and distillation produced binaries that fit edge budgets without catastrophic accuracy loss, aided by calibration datasets that reflect real sensor noise. Inference frameworks needed deterministic runtimes; kernels mapped to specific accelerators; and isolation via microkernels and lightweight virtualization ensured a misbehaving vision task could not stall a safety PLC loop. Scheduling spread work across terminal, edge, and cloud, balancing latency, privacy, and global optimization—e.g., a robot runs its policy locally, the edge computes multi-agent path plans, and the cloud optimizes yard-wide throughput nightly. This stack also considered maintainability: over-the-air updates with A/B partitions, secure enclaves for keys, and observability hooks that report latency, power draw, and model confidence for ongoing tuning.
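The A/B partition idea behind safe over-the-air updates can be modeled in a few lines. The class below is a toy simulation, not a real bootloader interface: `ABUpdater`, its slot names, and the `health_check` callback are assumptions used only to show why the previous image survives a failed update.

```python
class ABUpdater:
    """Toy model of an A/B partition scheme for over-the-air updates."""

    def __init__(self, version: str):
        self.slots = {"A": version, "B": None}
        self.active = "A"

    @property
    def inactive(self) -> str:
        return "B" if self.active == "A" else "A"

    @property
    def running(self) -> str:
        """Version stored in the currently active slot."""
        return self.slots[self.active]

    def apply(self, new_version: str, health_check) -> bool:
        """Write to the inactive slot, switch to it, and roll back if unhealthy."""
        target = self.inactive
        self.slots[target] = new_version  # the active slot is never overwritten
        previous = self.active
        self.active = target              # trial switch to the new image
        if health_check(new_version):
            return True
        self.active = previous            # rollback: the old image is intact
        return False
```

The same pattern extends to model binaries: a quantized model can be staged in the spare slot and promoted only after latency and confidence checks pass.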

Data Standards and High-Quality Datasets

Breaking silos required unified data models that map telemetry, events, and identities across brands and platforms. The plan called for standardized schemas and ontologies so a vibration reading or visual defect label meant the same thing from different vendors. It paired this with duties for data classification and grading, identifying and reporting important data, and governed sharing that kept flows secure while still useful. High-quality datasets were positioned as the “ammunition” for robust agents: curated, timely, and labeled with integrity, accuracy, and coverage that match the task. For an automotive plant, that might mean pixel-wise weld annotations under varied glare; for pipelines, vibration signatures labeled across bearing wear stages; for cities, synchronized weather, flood sensor, and traffic data with ground truth from incident logs. Tooling mattered too: data versioning that respects privacy, synthetic data generation for rare events, and validation harnesses to test models before deployment. These practices turned ad hoc analytics into engineering, raising confidence that agents behave as expected in the messy, shifting conditions of the physical world.
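A unified data model ultimately means translating each vendor's payload into one agreed record. The sketch below shows that translation for a single vibration metric; the vendor names, source field names, and the target schema are invented for illustration and do not come from the plan or any real product.

```python
# Hypothetical per-vendor mappings: source key and factor to convert to mm/s.
VENDOR_MAPS = {
    "vendor_a": {"key": "vib_rms_mm_s", "factor": 1.0},
    "vendor_b": {"key": "vibrationInS", "factor": 25.4},  # inches/s to mm/s
}


def normalize(vendor: str, device_id: str, payload: dict) -> dict:
    """Convert a vendor-specific payload into one shared telemetry record.

    Raises KeyError for unknown vendors or missing fields, so unmapped data
    is surfaced instead of silently passing through.
    """
    mapping = VENDOR_MAPS[vendor]
    return {
        "device_id": device_id,
        "metric": "vibration_velocity_rms",
        "unit": "mm/s",
        "value": payload[mapping["key"]] * mapping["factor"],
    }
```

With records in one shape and one unit, a downstream model or dashboard no longer needs to know which brand of sensor produced a reading.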

Identity, Security, and Path to Assetization

At the scale of billions of devices, unique and verifiable identity was not optional. The plan accelerated IPv6 adoption and asked new application terminals to default to IPv6, enabling globally unique addresses and cleaner network policies. “One object, one code, one number, one data” tied physical items to consistent identification and management metadata, improving auditability and lifecycle tracking. Network security identification management and links to national identification and resolution systems tightened admission control, making it easier to quarantine compromised nodes. Beyond basic identity, a blockchain-based distributed digital identity service added tamper resistance to device credentials and event logs, helping operators and regulators confirm provenance across organizational boundaries. Combined with standardized data governance, this created a path to assetization: device-generated data, with stable identity and confirmed lineage, could become tradable under policy oversight—think energy-saving certificates verified by metered baselines, or quality datasets shared in industry consortiums under usage controls. Security frameworks anchored this market: secure boot, attestation, encrypted storage, and continuous monitoring to detect anomalies in behavior, model drift, or attempted exfiltration before they escalate.
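The tamper resistance attributed to distributed identity and event logs rests on a simple primitive: each record commits to the hash of the one before it. The following is a simplified single-node hash chain, not a blockchain or any specific identity service; the function names and record fields are assumptions for illustration.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same content always hashes the same.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_event(log: list, device_id: str, event: dict) -> dict:
    """Append an event that commits to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {"device_id": device_id, "event": event, "prev_hash": prev_hash}
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify(log: list) -> bool:
    """Recompute every hash and link; any edit to past entries breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: entry[k] for k in ("device_id", "event", "prev_hash")}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True
```

A distributed system adds replication and consensus on top, so that no single party can rewrite the chain and recompute the hashes unnoticed.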

Practical Next Moves for Stakeholders

Enterprises considering alignment with the plan could start with an inventory of where edge autonomy would pay back quickly—stations with chronic bottlenecks, assets that drive most downtime, or city intersections with recurrent incidents—and scope pilots that tie outcomes to KPIs. Hardware teams should shortlist gateways and controllers that support quantized inference, microkernel isolation, and attestation, then validate thermal and power headroom under worst-case loads. Data leads can converge schemas across vendors, stand up labeling workflows, and capture synchronized sensor streams, seeding high-quality datasets tied to clear validation metrics. AI teams should nominate candidate models for descent, budget for compression and real-time constraints, and define canary and rollback procedures. Network architects can map workloads to the high–low mix—NB-IoT for ambient sensing, LTE-Cat 1 for handhelds and controllers, RedCap for mobile vision—and align QoS and slicing policies. Finally, legal and security teams can operationalize identity and governance: IPv6-by-default, “one object, one code, one number, one data” in asset registries, distributed identity where cross-boundary trust is needed, and continuous compliance monitoring against data-classification rules. This layered approach turned the policy from aspiration into deployable systems with verifiable returns.